The most common mistake teams make when setting up AI prompt tracking is treating prompts as keywords, which leads to generic queries that fail to capture real user intent and result in poor data quality.

Unlike a flaw in traditional SEO tracking, an improper AI prompt setup often leaves marketing teams blind to how AI engines like ChatGPT and Perplexity discuss their brands. These mistakes compound quickly, and you can end up making strategic decisions based on incomplete visibility.

"Prompt tracking is a brand intelligence tool, not a performance marketing metric. It tells you where you appear, how you're positioned, what competitors are out there, and where your gaps are."

Differences Between Traditional SEO Tracking and AI Prompt Tracking

| Aspect | Traditional SEO Tracking | AI Prompt Tracking |
|---|---|---|
| Primary goal | Rank in search engines | Appear in AI-generated answers |
| Data source | Search engine results | AI model outputs |
| Measurement unit | Keywords and rankings | Prompts and AI mentions |
| Optimization focus | Search ranking signals | Conversational intent alignment |
| User journey | Search → click → website | Ask AI → direct answer |
| Competition type | SERP positioning | AI answer inclusion |

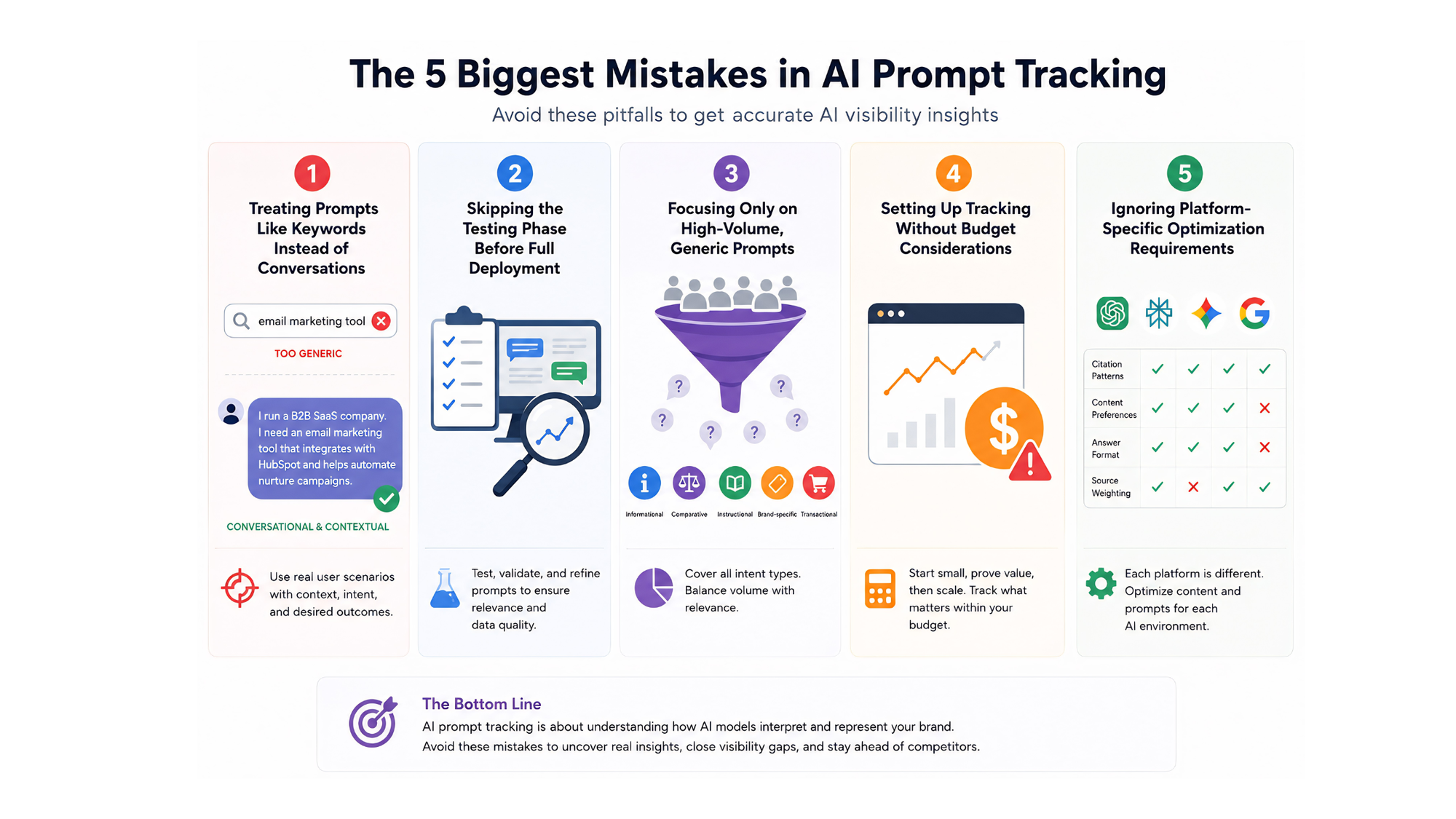

1. Treating Prompts Like Keywords Instead of Conversations

One of the most frequent and costly mistakes when setting up AI prompt tracking is using overly simplistic prompts.

A prompt like "e-mail marketing tool" is so generic that LLMs have no idea who is asking, what their actual problem is, or which solutions would be relevant. The result is a broad, informational answer that mentions few or no brands.

To avoid this mistake, write like a real user. Prompts should reflect how a customer would describe their situation to an AI assistant, not how a marketer would search on Google.

The goal is to simulate the end of a real conversation: context has been explained, and now the user is asking for the best solution. The more specific you are, the more likely your brand will appear naturally when it should.

Examples of Poor vs. Effective Prompts

Key Takeaway: Generic prompts produce generic answers with minimal brand mentions. Contextual prompts that mirror real customer conversations generate specific recommendations where your brand can appear naturally. Next, you'll want to make sure those prompts are actually worth tracking in the first place.

2. Skipping the Testing Phase Before Full Deployment

Another common mistake is jumping straight into tracking a list of prompts without testing them first.

If a prompt doesn't consistently generate relevant, helpful answers in ChatGPT, Perplexity, or other target platforms, it shouldn't be in your tracking setup. Tracking bad prompts wastes resources and pollutes your dataset with information that doesn't reflect real user intent.

The Testing Framework

Before adding any prompt to your permanent tracking setup, run it through this validation process:

- Multi-platform testing: Run each prompt on every AI engine you plan to monitor. Platforms like Indexly allow teams to track prompts across multiple AI engines, locations, and markets, making it easier to compare visibility across ChatGPT, Perplexity, and Google AI systems.

- Relevance check: The whole point of prompt tracking is understanding whether your brand shows up when it should. Prompts must be specific enough that your solution would be a natural recommendation.

- Response quality assessment: Does the AI provide actionable recommendations? Are competitors mentioned? Is the context appropriate for your target persona and their stage in the buying journey?

- Citation analysis: Note which sources the AI cites and whether your content appears in the retrieval process, even if your brand isn't mentioned directly in the text.
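
The checks above can be partly automated once you have saved a few responses per prompt. The sketch below is a minimal illustration, not a feature of any tool named here. The function name `validate_prompt`, the brand "Acme", and the 0.66 threshold are assumptions made for the example, and it relies on plain substring matching, which will miss misspellings and aliases.

```python
def validate_prompt(responses, brand, competitors, min_rate=0.66):
    """Score saved AI responses for one candidate prompt (hypothetical helper).

    A prompt is kept when enough responses name at least one brand in the
    category, either yours or a competitor's. min_rate is an assumed cutoff.
    """
    if not responses:
        raise ValueError("need at least one saved response")

    names = [brand] + list(competitors)
    lowered = [r.lower() for r in responses]

    # Responses that name any brand in the category.
    category_hits = sum(any(n.lower() in r for n in names) for r in lowered)
    # Responses that name your own brand.
    brand_hits = sum(brand.lower() in r for r in lowered)

    total = len(lowered)
    return {
        "category_rate": category_hits / total,
        "brand_rate": brand_hits / total,
        "keep": category_hits / total >= min_rate,
    }


# Three saved runs of the same prompt (invented sample text).
sample = [
    "For a small agency, Acme and Mailchimp are solid picks.",
    "Email marketing is a way to reach customers by email.",
    "Consider HubSpot or Acme for lead nurturing.",
]
result = validate_prompt(sample, "Acme", ["Mailchimp", "HubSpot"])
print(result["keep"])
```

A prompt that fails this check is a candidate for rewriting with more context rather than for tracking as-is. Response quality and persona fit still need a human read.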

Key Takeaway: Testing prompts before deployment prevents wasted resources and ensures your tracking setup reflects genuine user interactions that could drive business value.

For more detail on implementation, check out our guides on best prompt tracking tools, AI prompt tracking fundamentals, and prompt research strategies for AI visibility.

3. Focusing Only on High-Volume, Generic Prompts

A critical mistake teams make when deciding what to track is ignoring intent diversity and only tracking "best X" queries.

Most brands over-index on high-level comparative prompts and completely ignore the other types of questions their customers are asking AI assistants.

The Intent Diversity Problem

There are five core prompt types that map to the customer journey: informational, comparative, instructional, brand-specific, and transactional. Teams typically focus exclusively on comparative prompts like "best marketing automation software" while missing valuable opportunities across other intent categories. It's like only measuring paid search and ignoring organic—you're missing most of the picture.

A tracking strategy should cover all five intent types to capture a complete view of brand visibility.

| Prompt Type | Example | Business Value | Tracking Portfolio Allocation |
|---|---|---|---|
| Informational | How does email automation work for B2B companies? | Brand authority, thought leadership | 20% |
| Comparative | HubSpot vs Mailchimp for growing startups | Direct competitive positioning | 40% |
| Instructional | How to set up lead nurturing campaigns in 2026 | Solution positioning, expertise | 15% |
| Brand-specific | What are the alternatives to [your brand]? | Competitive defense | 10% |
| Transactional | Which email platform should I buy for my agency? | Purchase intent capture | 15% |
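
To turn the allocation percentages in the table into actual prompt counts, a small calculation helps. The sketch below is one possible approach under stated assumptions: the function name `allocate` and the rounding rule (largest remainder, so the counts always add up to the total) are choices made for this example, not part of any tracking tool.

```python
# Allocation percentages taken from the table above.
ALLOCATION_PCT = {
    "informational": 20,
    "comparative": 40,
    "instructional": 15,
    "brand-specific": 10,
    "transactional": 15,
}


def allocate(total_prompts, pct=ALLOCATION_PCT):
    """Split a prompt budget across intent types (hypothetical helper).

    Uses integer math plus largest-remainder rounding so the per-type
    counts always sum to total_prompts.
    """
    if sum(pct.values()) != 100:
        raise ValueError("percentages must sum to 100")

    counts = {k: total_prompts * p // 100 for k, p in pct.items()}
    remainders = {k: total_prompts * p % 100 for k, p in pct.items()}

    # Hand out any prompts lost to rounding, largest remainder first.
    leftover = total_prompts - sum(counts.values())
    for k in sorted(remainders, key=remainders.get, reverse=True)[:leftover]:
        counts[k] += 1
    return counts


plan = allocate(40)
print(plan)
```

With a 40-prompt set, this gives 8 informational, 16 comparative, 6 instructional, 4 brand-specific, and 6 transactional prompts.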

A strong prompt tracking setup uses a specific ratio of prompts. Long, standard prompts (70–80%) should be your core tracking set. These should contain full context: persona, company type, pain point, and a solution-oriented question. These prompts reflect real user journeys in LLMs and give you the most comparable data across competitors.
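
One way to keep long prompts consistent is to assemble them from the four context elements named above. The sketch below is illustrative only: `build_prompt` and the sample persona, company, and pain point are invented for the example and should be replaced with wording drawn from your own customers.

```python
def build_prompt(persona, company_type, pain_point, question):
    """Assemble a contextual tracking prompt (hypothetical helper).

    Each argument maps to one context element: who is asking, where they
    work, what problem they have, and the solution-oriented question.
    """
    parts = [
        f"I'm {persona} at {company_type}.",
        pain_point.strip(),
        question.strip(),
    ]
    return " ".join(parts)


prompt = build_prompt(
    persona="a marketing manager",
    company_type="a 15-person B2B SaaS startup",
    pain_point="Our onboarding emails are sent manually and leads go cold.",
    question="Which email marketing tool would you recommend?",
)
print(prompt)
```

Keeping the same structure across prompts makes results easier to compare between competitors, since only the context values change.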

Avoiding the "Best Of" Trap

Over-reliance on "best of" prompts is increasingly risky.

Recent data shows that queries asking for "best" and "top" recommendations were the primary prompts where Gemini began to focus less on external sources, with citations for "best of" listicles declining by 40%. That's a significant shift that most teams haven't noticed yet.

Platforms like Indexly help brands understand how AI engines prioritize different prompt types, enabling teams to build comprehensive tracking strategies that go beyond simple comparison queries.

Key Takeaway: Diverse prompt portfolios capture the full customer journey across AI platforms, while over-focusing on "best of" prompts misses 60% of potential brand mention opportunities. With the right prompts in place, your next consideration is the practical matter of budget and tools.

4. Setting Up Tracking Without Budget Considerations

A practical mistake is building an overly ambitious tracking plan that isn't sustainable. It's best to start with 20-40 prompts, run across 2-3 AI models, and track for at least 30 days before drawing conclusions.

Prompt tracking costs scale with volume, so keeping your initial setup focused and intentional is key to proving value before you ask leadership for a bigger budget.
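
A quick calculation shows how volume grows with each dimension you add. The sketch below assumes one run per prompt per model per day, which is an assumption for illustration. Actual run frequency and pricing depend on the tool you choose.

```python
def estimate_runs(prompts, models, days=30, runs_per_day=1):
    """Estimate total prompt runs for a tracking period (hypothetical helper)."""
    return prompts * models * days * runs_per_day


# Low and high ends of the suggested starting setup.
low = estimate_runs(prompts=20, models=2)   # 20 * 2 * 30 = 1200 runs
high = estimate_runs(prompts=40, models=3)  # 40 * 3 * 30 = 3600 runs
print(low, high)
```

Even the recommended starting range spans a threefold difference in volume, which is why it helps to fix the prompt count and model list before comparing plans.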

Budget-Conscious Platform Selection

If you're starting from zero and have a limited budget, platforms like Otterly AI ($29/mo) or Knowatoa ($59/mo with a free audit) provide genuine tracking capabilities with the lowest financial commitment, allowing you to establish a baseline without significant investment.

Free and Low-Cost Options

Budget-conscious teams can start with AI visibility tracking without a major financial commitment. Some free tools offer genuine utility with 8-model coverage without requiring premium tiers. For example, Promptmonitor Starter offers tracking across 8+ LLMs, publisher contact extraction, AI crawler analytics, and a 7-day free trial. This is enough to validate whether prompt tracking makes sense for your business before committing real money.

Key Takeaway: Effective AI prompt tracking doesn't require enterprise budgets. Starting with focused, budget-conscious setups allows teams to prove ROI before scaling investment. Once you have a budget and platform in mind, the final piece is understanding how to optimize across different AI engines.

5. Ignoring Platform-Specific Optimization Requirements

A final common mistake is assuming all AI platforms behave the same way. They don't. For instance, ChatGPT enables its search feature on just 34.5% of queries as of February 2026, meaning most of its responses still rely on its training data alone. Furthermore, ChatGPT only cites 50% of the pages it retrieves, and 88% of the URLs that end up being cited are taken directly from search results. This matters because it fundamentally changes how you should optimize.

Platform Behavior Differences

Each AI platform has distinct citing patterns and content preferences that must inform your tracking setup and content strategy:

- ChatGPT specifics: The top 5 metrics that consistently drive LLM citations are domain authority, high-quality backlinks (from DA 60+ sites), mentions in "best" listicles, total number of backlinks, and unique referring domains.

- Gemini changes: Gemini is showing fewer citations, dropping from 99% in February to 76% in March. It mentions brands in 83.7% of responses but only generates a citation link 21.4% of the time.

- Google AI variations: AI Overviews and AI Mode cite different sources. Data shows that only 13.7% of citations overlap between the two Google AI features, requiring separate optimization efforts.

- Citation positioning: Content structure matters immensely. 44.2% of all LLM citations come from the first 30% of an article's text (the introduction), while only 31.1% come from the middle section.

Multi-Platform Tracking Strategy

If you need to monitor the same prompt across multiple countries (e.g., US/UK/global), you need an AI visibility tracking tool that supports multi-engine prompt runs, location segmentation, and dashboards that allow you to compare markets side-by-side.
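
The combination of prompts, engines, and locations can be pictured as a run matrix. The sketch below only builds that matrix as plain data. The function name and record layout are assumptions, and how each run is executed depends on your tracking tool.

```python
from itertools import product


def build_run_matrix(prompts, engines, locations):
    """List every prompt, engine, and location combination to run."""
    return [
        {"prompt": p, "engine": e, "location": loc}
        for p, e, loc in product(prompts, engines, locations)
    ]


matrix = build_run_matrix(
    prompts=["Which email platform should I buy for my agency?"],
    engines=["ChatGPT", "Perplexity", "Gemini"],
    locations=["US", "UK", "global"],
)
print(len(matrix))  # 1 prompt * 3 engines * 3 locations = 9 runs
```

Grouping results by the `location` field afterwards gives the side-by-side market comparison described above.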

Why This Matters for Setup

AI mentions are not measurable by default—which is why only 14% of marketers currently track them. A modern toolkit is required to see when ChatGPT, Perplexity, or Gemini mention your brand by name in an answer, with or without a direct link. Brands using platforms like Indexly can track these platform-specific nuances and adjust their content strategy accordingly, ensuring consistent visibility across different AI engines with varying citation behaviors.

Key Takeaway: Each AI platform has unique citation patterns and content preferences. Generic tracking setups miss these nuances, leading to incomplete visibility data and missed optimization opportunities.

Conclusion

AI prompt tracking only works when it moves beyond keywords into real conversational context. Most failures come from overly generic, untested prompts that don’t reflect buyer intent.

The core issue is assuming AI behaves like search. It doesn’t—it interprets intent, roles, and context, which makes shallow prompts unreliable.

The better approach is AI visibility intelligence: use conversational prompts, validate them, cover intent stages, control scale, and account for platform differences.

Done right, it shifts tracking from reporting to insight—showing not just if a brand appears, but why and where it’s missing.

At scale, this requires infrastructure for multi-model tracking and citation analysis. Indexly helps operationalize this by mapping brand visibility across AI answers, competitors, and intent-driven prompts.

FAQs

What are the most common mistakes people make with AI prompt monitoring?

The biggest mistake is tracking only brand mentions without understanding intent. Many teams focus on “Did we appear?” instead of “Why did we appear and in what context?”

How do you set up AI prompt tracking on a limited budget?

If you're starting with a limited budget, use entry-level tools like Otterly AI ($29/mo) that offer genuine tracking capabilities with a low financial commitment.

What should I test before setting up AI prompt tracking?

Before adding a prompt to your tracking system, you should test it two to three times across all LLMs you plan to monitor (e.g., ChatGPT, Perplexity, Google). During testing, check that the prompts generate relevant, helpful answers and that they are specific enough that your solution would be a natural recommendation. Untested prompts waste time and skew your data, so this validation step is crucial.

How do you choose an AI prompt tracking tool?

Choose a tool that tracks prompts across multiple AI models, supports location-based and competitor tracking, and shows brand mentions with context (not just counts). It should also allow prompt testing, filtering by intent, and integration with your SEO or analytics stack for performance comparison.

Why do brands track prompts in ChatGPT?

Brands track ChatGPT prompts to understand how often their name appears in AI responses and in what context. Since standard analytics can’t measure AI-generated mentions or competitor inclusion, prompt tracking helps reveal brand visibility, positioning, and missed opportunities in AI-driven search.

What is the best AI prompt tracking platform for digital agencies managing multiple clients?

The best platform is Indexly. It supports multi-client dashboards, separates data by brand, and allows scalable prompt libraries across accounts. Agencies should prioritize tools with multi-model coverage (ChatGPT, Perplexity, Gemini), white-label reporting, and the ability to compare AI visibility across clients in one place.